Published

- 15 min read

Umwelt: The Shape of Meaning

Language doesn’t just carry thought. It bends the space thought moves through.

Jakob von Uexküll’s most famous creature is the tick. Blind, deaf, and largely immobile, it clings to a branch and waits — sometimes for years — for exactly three signals: the scent of butyric acid rising from a passing mammal’s skin, the sensation of warmth, and the feel of a surface to burrow into. Out of everything that exists in a forest — the light, the wind, the chemical complexity of soil and bark and pollen — the tick’s world collapses to three cues. Not because the rest doesn’t exist, but because the rest doesn’t exist for the tick. Its body can detect three things. Its behavioral repertoire can act on three things. And so its world is three things.

Uexküll called this the organism’s Umwelt: not the environment in the objective sense, but the experienced, action-relevant world — the slice of reality that becomes meaningful given a particular body, particular sensors, and a particular repertoire of things to do. The tick’s Umwelt is not a degraded version of ours. It is a different world entirely, defined by a different geometry of relevance.

This idea — that organisms do not inhabit a single shared reality but each live inside a species-specific bubble of meaning — was radical when Uexküll published it in 1934. It remains radical now, because its deepest implication has never been fully absorbed. The Umwelt concept doesn’t just apply to ticks and sea urchins. It applies to us. Humans do not live in raw reality either. We live in world-models — structured by perception, memory, culture, and crucially, by language. And those world-models are not just different in content, the way a French speaker and a Mandarin speaker have different words for the same things. They are different in structure. The shape of meaning itself varies.

This essay describes a research program built on that claim — an attempt to take the Umwelt idea out of philosophy and metaphor and into mathematics. The core hypothesis is specific enough to be wrong, which is exactly what makes it worth pursuing.

The geometry you don’t notice

The idea of “semantic space” is by now familiar in both cognitive science and artificial intelligence. Represent meanings as points in a high-dimensional space. Measure similarity by distance. Do reasoning by vector operations. Word embeddings, sentence embeddings, the internal representations of large language models — all of these live in geometric spaces where proximity is supposed to mean something like relatedness.

But there is a crack in the foundation of nearly all this work, and it runs deeper than most practitioners realize.

Almost every standard operation on these spaces assumes the geometry is Euclidean — flat. Cosine similarity, linear interpolation, the famous vector arithmetic where “king minus man plus woman equals queen” — all of these are operations that behave correctly only if the space is globally uniform. The distance between two points means the same thing regardless of where in the space those points sit. A straight line between two meanings is the shortest path. The geometry doesn’t change as you move through it.

Peter Gärdenfors, in his work on conceptual spaces, proposed something more structured: that concepts are regions in geometric spaces with interpretable dimensions, where similarity corresponds to distance and categories correspond to convex regions. This was a crucial step — it treated meaning as having genuine spatial structure rather than being a flat list of features. But even Gärdenfors’s framework, elegant as it is, typically assumes a fixed, well-behaved geometry within each dimension.

The hypothesis at the center of this project says: that flat assumption is not merely an approximation. It is wrong in a specific, measurable, consequential way. And the way it is wrong tells us something deep about both human cognition and the machines we have built to process language.

Curved meaning

The hypothesis — call it the geometric Whorfian hypothesis — is this: each language, and even each individual’s idiolect, induces a semantic geometry that is not flat but curved. A Riemannian manifold rather than a Euclidean space. A surface where distance depends on where you are and which direction you’re moving, where the local notion of “nearby” stretches and compresses depending on what region of meaning you inhabit.

This requires a moment of unpacking, because the mathematical language can obscure what is actually a very intuitive idea.

A Euclidean space is like an infinite flat tabletop. Distances behave the same way everywhere. A straight line is always the shortest path. If you know the coordinates of two points, you can compute their distance with a single, fixed formula, and that formula doesn’t care where the points are.

A Riemannian space is like the surface of the Earth. Locally — in your neighborhood, on your street — everything looks flat, and Euclidean geometry works fine. But globally, the surface curves. The shortest path between two cities is not a straight line on a map; it’s a great circle, a geodesic that follows the curvature of the surface. If you pretend the Earth is flat and navigate by straight lines, you accumulate errors that grow with distance. The fix is not to draw better straight lines. The fix is to admit the surface is curved and compute accordingly.

The geometric Whorfian hypothesis proposes that semantic spaces behave the same way. Locally, around any given concept, things look approximately linear — the usual vector operations work tolerably well for nearby meanings. But globally, the space may be curved, anisotropic — stretched differently in different directions — and structured into basins, ridges, and folds that correspond to the deep organizational structure of a language. The metric tensor, the mathematical object that describes how distances work at each point, varies smoothly across the space. And that variation is not noise. It is the signature of how a particular language, a particular culture, a particular mind carves up reality.

This is where Uexküll’s tick comes back. The tick’s Umwelt is defined by the structure of its sensory and motor apparatus — what signals become real and what actions are possible. The geometric Whorfian hypothesis says that a language’s Umwelt is defined analogously, by the structure of its semantic geometry — what distinctions become salient, what similarities become obvious, what inferential paths become natural and which become effortful or invisible. Not because some thoughts are impossible in some languages — that crude version of the Whorf hypothesis was rightly criticized. But because the shape of the space through which thought moves is different, and shape constrains motion.

What curvature means, concretely

This is the point where skepticism is appropriate, and I want to meet it directly. “Semantic curvature” is exactly the kind of phrase that can seduce you into thinking you’ve explained something when you’ve only renamed it. Words like “manifold” and “metric tensor” carry an aura of mathematical authority that can substitute for actual evidence if you let them. The history of interdisciplinary work is littered with borrowed formalisms that turned out to be decorative rather than load-bearing.

So the question that matters is not whether the mathematics is elegant. It is whether the curvature is real — whether it shows up in measurements, makes predictions, and outperforms the simpler flat alternative.

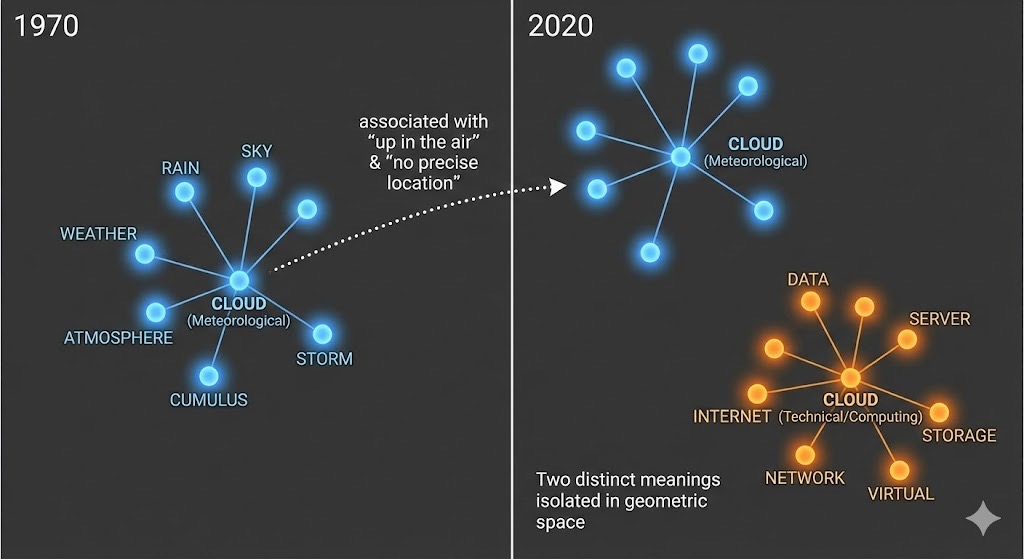

Here is what “real curvature” would look like, concretely. Consider the way different languages partition the color spectrum. Russian makes an obligatory distinction between light blue (goluboy) and dark blue (siniy) that English does not. This is not just a lexical curiosity. Decades of research in cross-linguistic color perception have shown that these linguistic boundaries create measurable effects on categorical perception — reaction time advantages for discriminating colors that cross a linguistic boundary versus colors that don’t. In the geometric framework, this means the semantic space around “blue” has different metric structure in Russian than in English. The distance between light blue and dark blue — measured not by wavelength but by cognitive salience — is larger in Russian. The space is stretched in that region. A Euclidean model that assigns the same metric everywhere will miss this entirely. A Riemannian model that allows the metric to vary locally can capture it.

That is one example. But the claim is general. Wherever a language makes a distinction that another language collapses, or collapses a distinction that another language makes, the local geometry of the semantic space should differ. And those geometric differences should predict measurable downstream effects: differences in similarity judgments, in analogical reasoning, in the errors language models make when translating between languages, in the inferential shortcuts that feel natural in one tongue and labored in another.

The project we’ve built — also called Umwelt — exists to test whether this is true.

Measuring the manifold

The research program has two interlocking components, and the relationship between them is deliberate.

The first is the empirical program itself: a set of hypotheses, experiments, benchmarks, and predictions designed to determine whether semantic geometry is genuinely Riemannian in a way that matters. This means asking questions that have answers. What evidence would count as “semantic curvature” rather than noise or artifact? How stable are geometric estimates across different language models, different corpora, different languages, and different time periods? Which downstream tasks are sensitive to geometry, and which are indifferent? What do we learn about human cognition by measuring the semantic geometry of large language models — and what do we learn about the models by treating them as semantic instruments?

The second is a computational library called Astrometrics. The name is not decorative. Astrometry is the science of measuring positions and motions of celestial bodies — the foundation of observational astronomy. Astrometrics does the analog in semantic space: measuring positions, neighborhoods, distances, trajectories, curvature, and how all of these change under the influence of different languages, different domains, different stages of model training. And the word “metrics” is literally the heart of the matter. We are building machinery for learning and applying the metric of a space — the mathematical object that defines what distance means.

The library exists to make the theoretical hypothesis testable. It extracts and manages embedding representations across models and layers. It estimates local geometry — how distances behave in the neighborhood of a given point. It compares Euclidean and Riemannian notions of similarity and movement. It computes curvature diagnostics. It implements manifold-aware operations — geodesics, exponential and logarithmic maps, parallel transport — in a way that is computationally practical rather than merely ceremonial. And it does all of this under a single governing principle: if we claim a geometric structure exists, we should be able to compute with it and make predictions that outperform flat baselines.

That last clause is the one we are unusually stubborn about.

The discipline of baselines

It is easy to build an impressive geometric story. It is considerably harder to show that story survives contact with real numbers.

The validation layer of this project is where we force ourselves to answer uncomfortable questions. Does the manifold-aware method predict something measurable? Does it generalize out of sample? Does it beat the simplest reasonable Euclidean baselines? Does the effect persist across models, or does it appear only in one cherry-picked architecture? Can we reproduce it after changing random seeds, corpus slices, or evaluation datasets?

We treat these questions as design constraints, not afterthoughts. Out-of-sample testing is mandatory — if a result doesn’t generalize, it doesn’t count. Baselines are sacred — if a Euclidean method does equally well, we don’t get to handwave away the comparison. Ablations matter — if the supposed Riemannian advantage disappears when you remove a single component, that’s information, not embarrassment. Effect sizes take priority over statistical significance, because a tiny but reliable curvature effect and a huge but fragile one tell very different stories. And we insist on testing across multiple models and multiple datasets whenever possible, because single-model results are interesting but cross-model patterns are evidence.

This discipline exists because “semantic geometry” is exactly the kind of concept that can become unfalsifiable if you let it. We do not want a belief system. We want an empirical program. If the geometric Whorfian hypothesis is true, it should show up as a persistent, structural advantage when we use the correct geometry. If it is not true — or is only weakly true — we want to discover that early, loudly, and with receipts.

Why this matters beyond the philosophy

There is a second reason for this project, and it has to do with the machines.

Large language models are not just language toys. They are the first widely deployed systems that behave like semantic engines — systems whose internal operations are, in a meaningful sense, movements through a space of meaning. Under the geometric Whorfian framework, an LLM is not an alien intelligence. It is a distilled, mechanized compression of how human texts carve up reality. And if the geometry of that compression is genuinely manifold-shaped and language-specific, a cascade of practical consequences follows.

Consider training dynamics. If training changes the geometry of a model’s semantic manifold in measurable ways — if certain training stages produce characteristic warping, if domain overfitting has a geometric signature, if the transition from syntactic pattern-matching to genuine semantic competence corresponds to a detectable change in curvature — then we gain diagnostic tools that go beyond loss curves and benchmark accuracy. We gain the ability to compare model checkpoints not just by what they get right, but by the shape of their semantic world.

Consider alignment. The field currently discusses alignment largely in terms of objectives and rules layered on top of a model’s behavior. A geometric view suggests a complementary angle: undesirable behaviors may correspond to reachable regions in semantic space, safe behavior may be a constraint on allowed trajectories, and interventions might be framed as reshaping geometry — smoothing cliffs, widening basins, separating hazardous attractors from benign ones. This is speculative, but it is the kind of speculation that becomes testable once you have the measurement tools.

Consider translation. If different languages induce genuinely different semantic topologies — different folds, densities, and neighborhood structures — then translation is not just mapping words to words. It is mapping regions to regions while respecting geometry: preserving conceptual neighborhoods, preserving entailment-like structure, preserving culturally salient separations that are “far apart” in one language but collapsed in another. Translation becomes a manifold-mapping problem, not merely a sequence-to-sequence problem. And the failures of current machine translation — the places where the output is fluent but subtly wrong in meaning — may be precisely the places where the geometric mismatch is largest.

And consider something more speculative still. Next-token prediction, the engine that drives current language models, is powerful but fundamentally local and syntactic. A geometric approach hints at a higher-level operation: moving not to the next word but to the next coherent semantic region, treating meaning as evolving within a landscape of concept basins, predicting transitions between structured conceptual sets rather than between individual tokens. “Next semantic convexity” is a placeholder phrase, but it names a real possibility — semantic generation guided by the structure of thought rather than the surface of text.

The tick, the model, and the space between

Uexküll’s great insight was that an organism’s world is not the world. It is the world as structured by that organism’s capacities — sensory, motor, cognitive. The tick does not experience a degraded version of human reality. It experiences tick-reality, which is a different manifold entirely, defined by a different geometry of relevance.

The geometric Whorfian hypothesis extends this insight from biology into language and cognition. A language does not provide a neutral window onto a shared reality. It provides a shaped window — a semantic manifold with its own curvature, its own metric, its own geodesics along which thought travels most naturally. English-speakers and Russian-speakers do not merely use different words for the same meanings. They inhabit semantic spaces with different local structure, and those structural differences constrain reasoning, association, analogy, and inference in ways that are measurable if you have the right instruments.

This means that disagreement is not always misinformation. Sometimes it is geometry mismatch — two people navigating the same question through semantic spaces that carve the territory differently. Misunderstanding is not always missing facts. Sometimes it is navigating the wrong manifold. Persuasion is not always evidence. Sometimes it is a forced remapping of distances and saliences — a deformation of someone’s semantic geometry that makes previously distant conclusions feel nearby.

These are large claims. They may be wrong. And that is precisely the point.

We started this project because the idea is too important to leave as metaphor. “Worldview” usually lives in essays, arguments, and therapy sessions. That is appropriate — worldview is a lived thing. But it is also a structural thing, and structure can be measured. The Umwelt project is a bet that we can specify the geometry we claim exists, compute with it, validate it against reality, and use it to build better interpretability and better tools.

Not as a manifesto. As an instrument.

We will publish results as we get them — including the ones that break our favorite stories. The universe does not owe us curvature. But if it is there, we intend to measure it.